Jetty Monitoring

Monitor Jetty performance seamlessly with the Atatus Java agent, offering real-time visibility into your Jetty HTTP server, servlet engine, and JVM performance. Gain deep insights into request handling, transaction flow, and system health to ensure an efficient and high-performing server stack.

Where Jetty production visibility breaks

Request Handling Ambiguity

Connector configuration and handler chains make it difficult to confirm how requests were actually processed under live traffic.

Fragmented Execution Context

Errors surface without complete thread, request, or execution state, forcing engineers to reconstruct runtime conditions manually.

Slow Fault Localization

Determining whether failures originate in handlers, servlets, or downstream services takes longer as execution paths deepen.

Hidden Thread Contention

Thread pool saturation and scheduling delays develop gradually, remaining invisible until latency and error rates spike.

Dependency Timing Gaps

Upstream and downstream dependencies introduce delays that are difficult to correlate with Jetty request handling behavior.

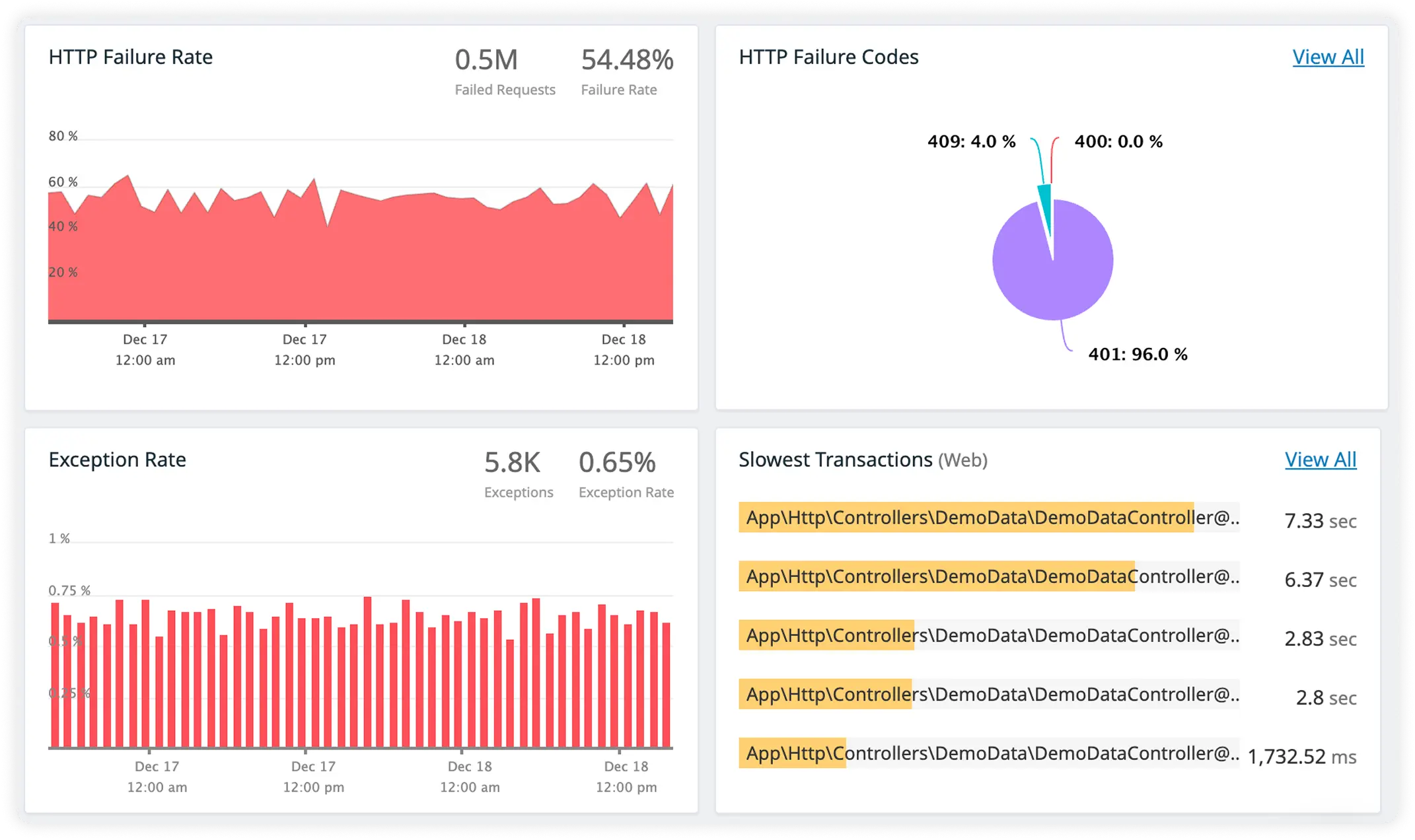

Noisy Failure Signals

Alerts lack execution depth, pushing teams to respond to symptoms rather than the underlying processing breakdown.

Unclear Scale Dynamics

Rising concurrency and connection counts alter runtime behavior in ways teams cannot clearly observe or predict.

Declining Operational Confidence

Repeated blind investigations reduce trust in production understanding, slowing decision-making during high-impact incidents.

Complete Performance Visibility for

Jetty Applications

Real-time observability for Jetty workloads that helps teams understand request processing, manage concurrency, and optimize server performance in production.

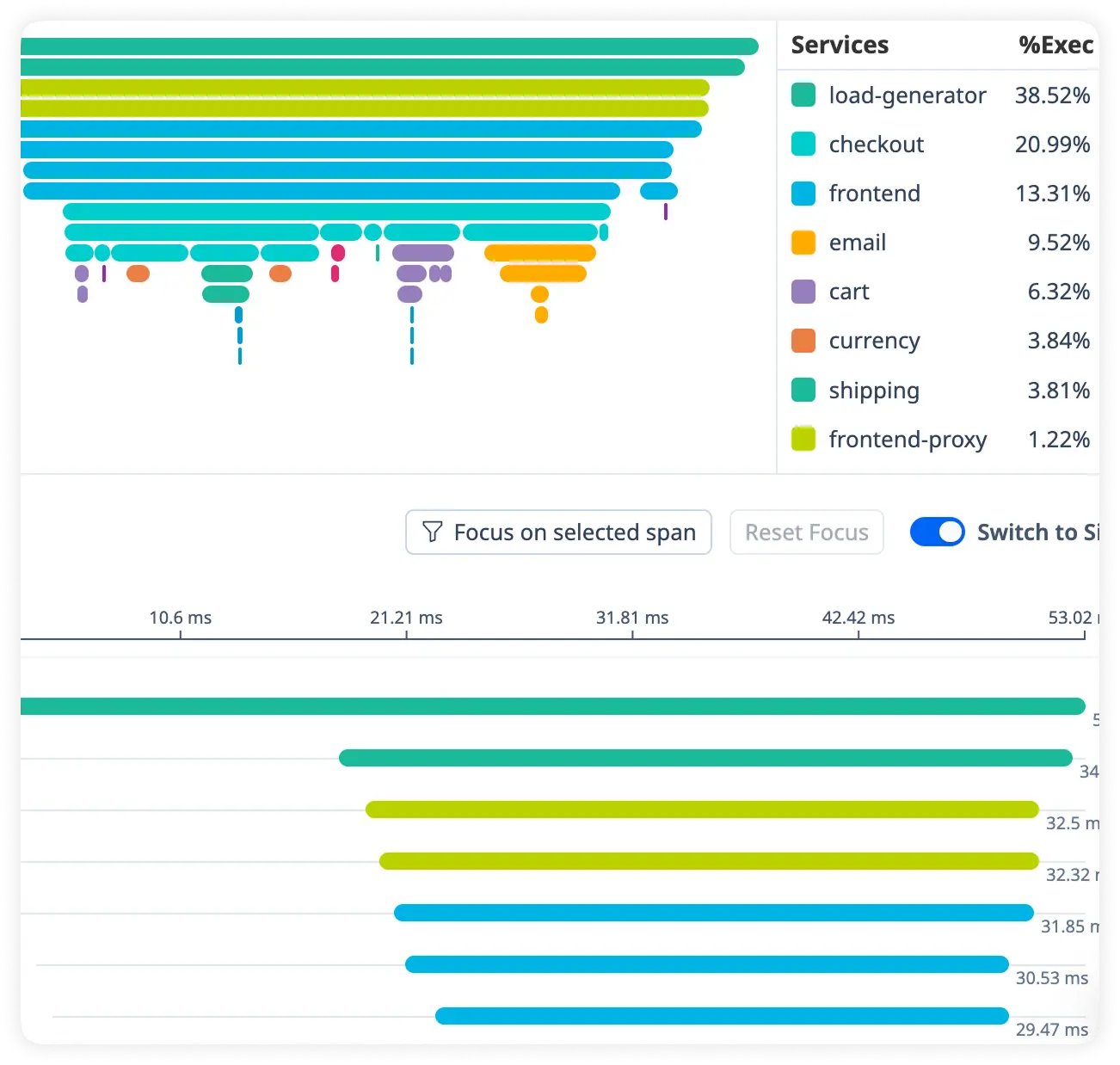

Trace-Level Visibility for Jetty Requests

Track every incoming request across handlers, threads, and backend services to identify processing delays and performance bottlenecks quickly.

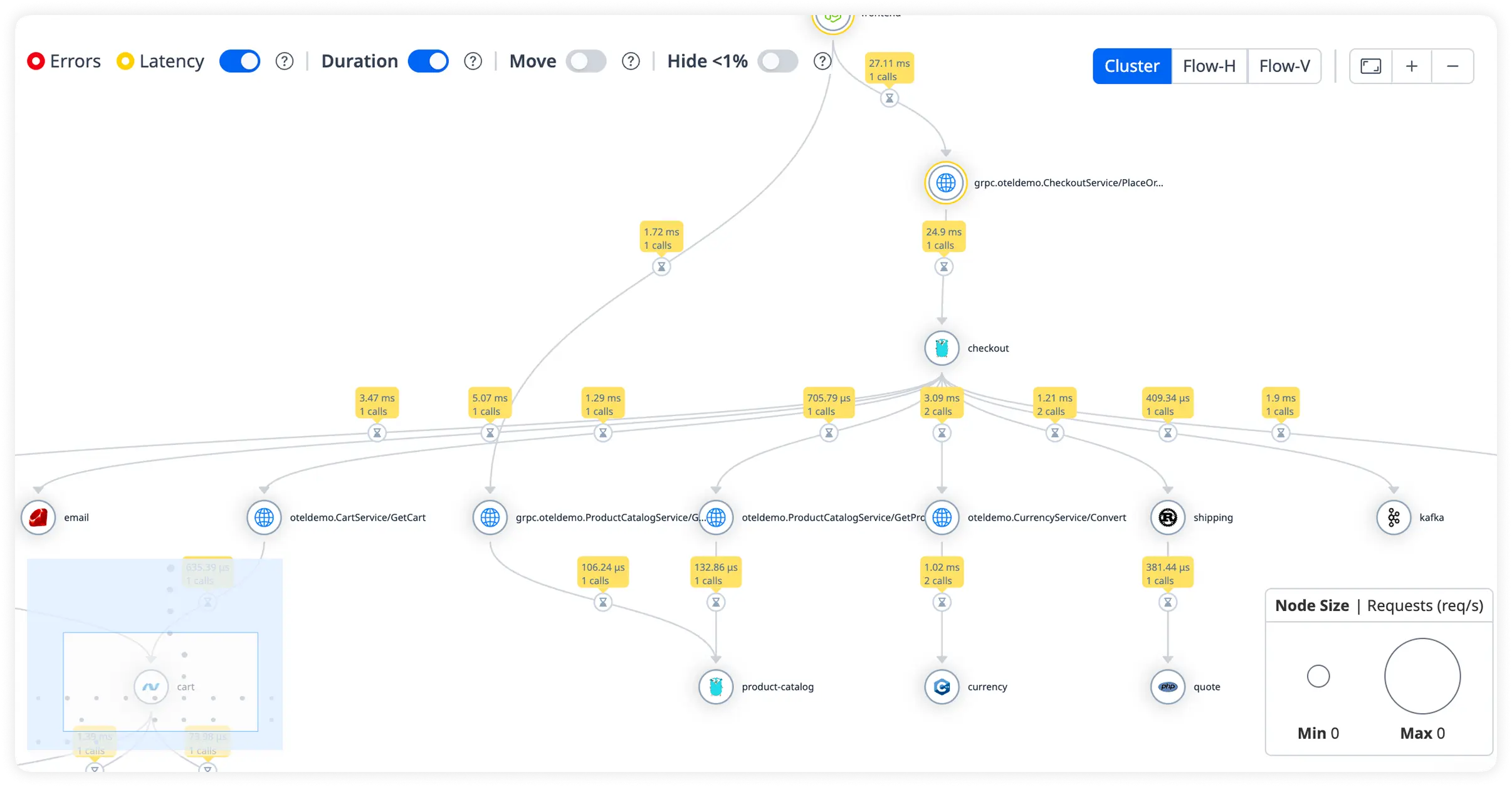

Visualize Server Dependencies

See how Jetty connects with databases and APIs using live latency metrics and traffic patterns to optimize system performance.

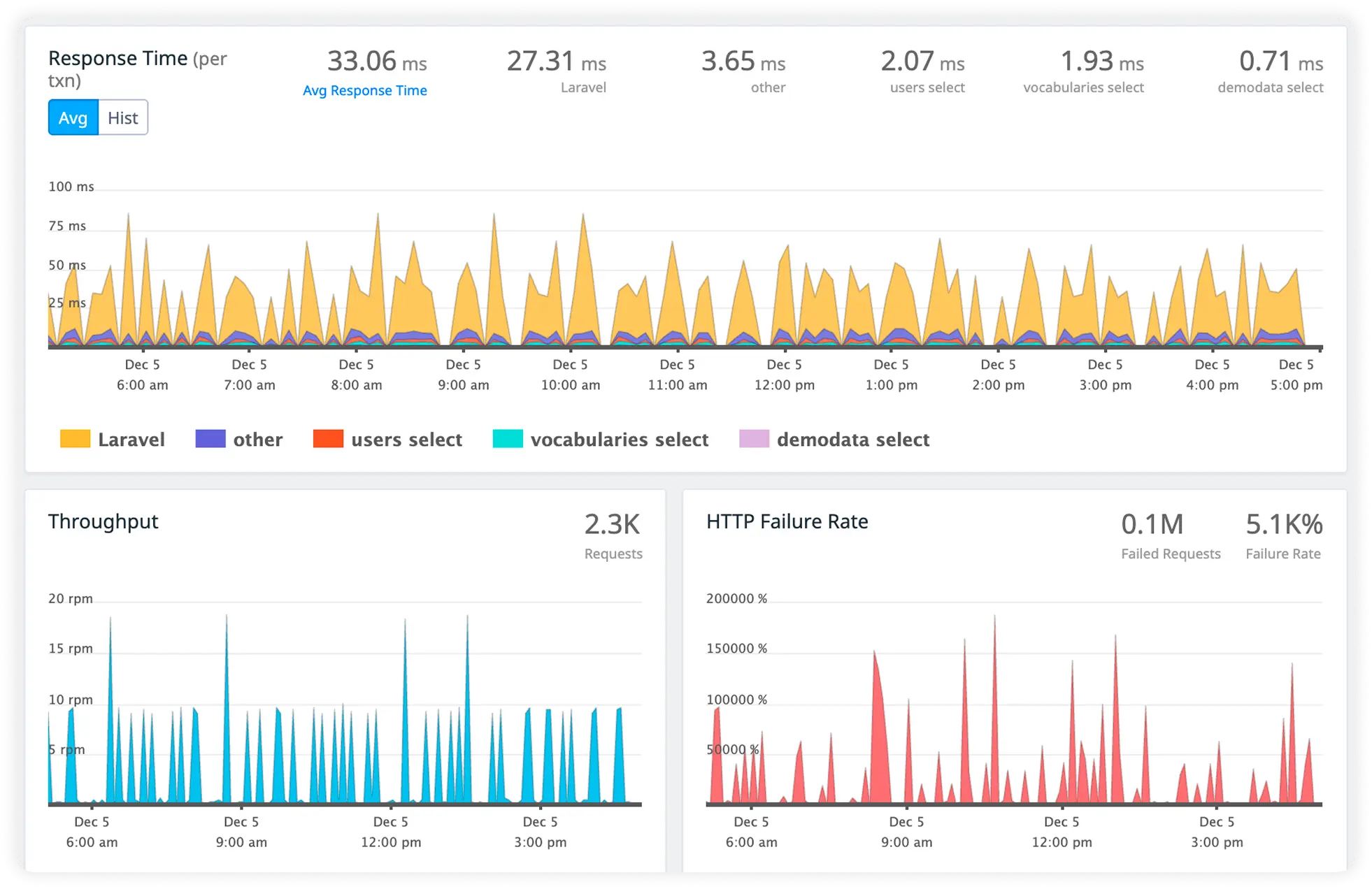

Monitor High-Traffic Transactions

Identify slow sessions and request spikes that impact server responsiveness and user experience.

Track External Calls

Monitor downstream service latency and failures to maintain application stability and reliability.

Why Jetty teams standardize on Atatus

As Jetty deployments scale in traffic, concurrency, and ownership, understanding real production behavior becomes harder than operating the infrastructure itself. Teams standardize on Atatus to preserve execution clarity, align engineers around the same runtime reality, and maintain confidence as system complexity grows.

Clear Execution Flow

Engineers understand how requests move through handlers, threads, and execution stages without reconstructing Jetty internals.

Fast Team Alignment

Platform, SRE, and backend teams reach shared production understanding quickly, even during high-severity incidents.

Immediate Signal Confidence

Production signals are trusted early in investigations, enabling faster and more decisive response.

Lower Debug Overhead

Engineers spend less time correlating logs and thread behavior and more time isolating execution faults.

Predictable Incident Response

Incident handling follows consistent analytical patterns as traffic volume and system complexity increase.

Shared Runtime Reality

Teams reference the same execution evidence during outages and post-incident reviews.

Stable Under Concurrency

Production understanding remains intact as connection counts and parallelism rise.

Reduced On-Call Fatigue

Clear runtime insight shortens incident duration and reduces escalation loops for on-call engineers.

Long-Term Operational Trust

Teams continue scaling Jetty-based systems without fear of unseen production behavior.

Unified Observability for Every Engineering Team

Atatus adapts to how engineering teams work across development, operations, and reliability.

Developers

Trace requests, debug errors, and identify performance issues at the code level with clear context.

DevOps

Track deployments, monitor infrastructure impact, and understand how releases affect application stability.

Release Engineer

Measure service health, latency, and error rates to maintain reliability and reduce production risk.